Earlier this year, Johns Hopkins University Press released Measuring Success: Testing, Grades, and the Future of College Admissions, a book that has generated considerable media attention with articles in USA Today, Inside Higher Ed, and The Wall Street Journal. The book offers substantial scholarly evidence to support claims that college admissions tests, such as the ACT and SAT, do add value to higher education generally and the college admissions process specifically.

Measuring Success also offers counter-claims from test-optional advocates, those who believe the value of standardized tests is limited. Perhaps the most compelling evidence offered by test-optional advocates is that high school Grade Point Average (GPA), not standardized test scores, is the best predictor of student retention and college graduation rates. If one test, lasting approximately three hours, could be as predictive as, or more predictive than, four years of prior academic achievement, that would be quite a remarkable achievement by the test makers at ACT and the College Board.

Perhaps not surprisingly, most researchers at ACT and the College Board, as well as independent researchers, do seem to agree that high school GPA is the single most predictive measure of prior student achievement. Their contention is that standardized tests may add some additional value in better understanding college success.

Based upon this book, I recently invited Lynn Letukas, one of the book’s co-editors, and Edgar Sanchez, a contributing author, to participate in an Astra Academy webinar. A copy of the webinar presentation may be found by clicking here.

After reading the book, it strikes me that there are at least three reasons why standardized tests may add value to higher education, and in particular, the college admissions process.

Standardized Tests are Predictive of College Success

In Measuring Success, University of Minnesota researchers Paul Sackett and Nathan Kuncel find that combining high school GPA and standardized test scores offers a better predictor of college GPA, with a correlation just under 0.80, than high school GPA alone.
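To make the idea of a combined predictor concrete, here is a minimal sketch in Python. It is not Sackett and Kuncel's analysis: the data are synthetic, the variable names (`readiness`, `hs_gpa`, `test_score`, `college_gpa`) and noise levels are assumptions chosen for illustration, and the resulting correlations are not meant to match the figures reported in the book.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 5000

# Synthetic, illustrative data only (an assumption, not data from the book):
# a latent "readiness" factor drives all three measures, each with its own noise.
readiness = rng.normal(size=n)
hs_gpa = readiness + rng.normal(scale=1.0, size=n)
test_score = readiness + rng.normal(scale=1.0, size=n)
college_gpa = readiness + rng.normal(scale=0.8, size=n)


def multiple_r(predictors, outcome):
    """Correlation between the outcome and its least-squares prediction."""
    # Design matrix: an intercept column followed by one column per predictor.
    X = np.column_stack([np.ones(len(outcome))] + list(predictors))
    coef, *_ = np.linalg.lstsq(X, outcome, rcond=None)
    return float(np.corrcoef(X @ coef, outcome)[0, 1])


r_gpa_only = multiple_r([hs_gpa], college_gpa)
r_combined = multiple_r([hs_gpa, test_score], college_gpa)

print(f"HS GPA alone:        r = {r_gpa_only:.2f}")
print(f"HS GPA + test score: r = {r_combined:.2f}")
```

One caution when reading output like this: a least-squares fit evaluated on the same data it was fitted to can never get worse when a predictor is added, so the interesting question is how large the gain is, not whether one exists.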

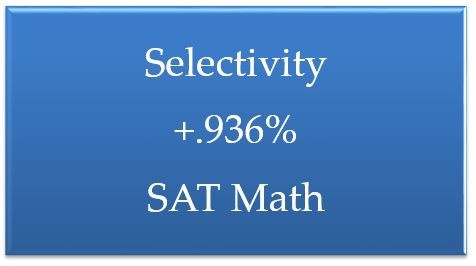

In my own research at Ad Astra, I have found that even components of standardized tests, such as the SAT-Math, can be predictive of college success on outcomes such as first-year retention. For example, among the now more than 200 institutions that have participated in the Higher Education Scheduling Index, I found that a higher SAT-Math score for the top 25 percent of students at an institution is associated with approximately a one percent increase in that institution’s first-year retention rate. Intuitively, this makes sense: we would expect students who previously did well on a standardized math test to do better than their peers in college courses, especially if one of those courses is a college math course.

Figure 1 — Standardized Increase in Retention Rates by SAT Math Scores for Top 25 Percent

Standardized Tests Offer a Check Against Grade Inflation

Perhaps some of the most compelling evidence for the value of standardized tests in Measuring Success comes from a chapter on high school grade inflation. Researchers Michael Hurwitz and Jason Lee find evidence that over the past 20 years, high school grades have been increasing, as measured by high school GPA, but standardized test scores for students have not. This is important because if high school GPA is our best predictor of student success, increases in GPA without a corresponding increase in standardized test scores may signal grade dilution and that the value of an “A” or “4.00” is changing.

Hurwitz and Lee find that a student in 2016 received a GPA approximately 0.15 points higher than a 1998 student who had the same SAT score. They also found that the increase has more than doubled in the past ten years. Perhaps most troubling are findings that grade inflation is increasing faster for white, Asian, wealthy, and private school students than for those in public schools. Taken together, it appears that standardized tests might offer some type of check against grade inflation. Whether it be the ACT or SAT, all test takers are asked the same questions whether they come from predominantly white, Asian, African American, or Latino schools. This meritocratic check might add value to the college admissions process, as a standardized test score is independently verifiable and uniform, compared with grades from an out-of-state high school with a less certain academic reputation.

Standardized Tests Offer Value Under Conditions of Discrepant Achievement

Although most students who do well in high school tend to do well on the ACT or SAT, sometimes results are discrepant. Discrepant achievement occurs when students have a high or low high school GPA and, inversely, low or high standardized test scores. In Measuring Success, Edgar Sanchez, Senior Research Scientist at ACT, explores four reasons why discrepant achievement could occur: (1) “Poor Tester,” good high school grades, but low admissions test scores; (2) “Underprepared Student,” good high school grades, but underprepared for college due to less rigorous coursework; (3) “Good Tester,” low high school grades, but high admissions test scores; and (4) “Prepared Students,” low high school grades, but academically prepared due to rigorous high school coursework.
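As a rough illustration of how discrepant records might be flagged in practice, the sketch below standardizes GPA and test scores and compares the two. This is not the method Sanchez uses in the book: the function names, the one-standard-deviation `threshold`, and the five sample records are all hypothetical. Note also that scores alone can only show the direction of a discrepancy, not which of the four explanations applies to a given student.

```python
from statistics import mean, pstdev


def z_scores(values):
    """Standardize values to mean 0 and standard deviation 1."""
    m, s = mean(values), pstdev(values)
    return [(v - m) / s for v in values]


def discrepancy_flags(hs_gpas, test_scores, threshold=1.0):
    """Label each student by the gap between standardized GPA and test score.

    The threshold (in standard deviations) is a hypothetical cutoff
    chosen for illustration, not a value taken from the book.
    """
    flags = []
    for gpa_z, test_z in zip(z_scores(hs_gpas), z_scores(test_scores)):
        if gpa_z - test_z >= threshold:
            flags.append("GPA higher than test score")
        elif test_z - gpa_z >= threshold:
            flags.append("test score higher than GPA")
        else:
            flags.append("consistent")
    return flags


# Made-up records for illustration only (GPA on a 4.0 scale, ACT composite).
hs_gpas = [3.9, 2.4, 3.2, 3.8, 2.5]
act_scores = [18, 31, 24, 33, 17]

flags = discrepancy_flags(hs_gpas, act_scores)
for gpa, act, flag in zip(hs_gpas, act_scores, flags):
    print(f"GPA {gpa:.1f}, ACT {act}: {flag}")
```

Standardizing both measures first puts GPA and test scores on a common scale, so a single cutoff can be applied to the difference between them.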

These varying real-world conditions, which comprise about 25–30 percent of all high school students’ outcomes, allow researchers to study how likely students are to succeed in college based upon their prior academic experience. Edgar and his colleagues find that when a student has a moderate or high high school GPA, but a low ACT score, they are less likely to be retained in college. These “Poor Tester” or “Underprepared Student” cases are also the students who, under test-optional policies, are most likely to withhold their scores from admissions officers.

Taken together, standardized tests do seem to predict college success and offer higher education professionals additional insights into how likely students are to succeed in college.

In a world teeming with predictive analytics and data science breakthroughs, I am left wondering why college and university enrollment managers would shift to a test-optional policy when it appears to be less predictive of student success, lacks a check against grade inflation, and increases the likelihood of admitting underprepared students whose achievement is discrepant. At the very least, why wouldn’t any enrollment manager want additional information that could help provide insights into which students are likely to be retained and graduate on time?

Click here to listen to the Astra Academy Webinar
